AI Part 2: Terminology

Here is a brief list of common terms used in AI with simple but accurate definitions. Be aware that while they have precise meanings, many of these terms are used interchangeably by authors on the internet.

The list is presented in two versions: topical and alphabetical.

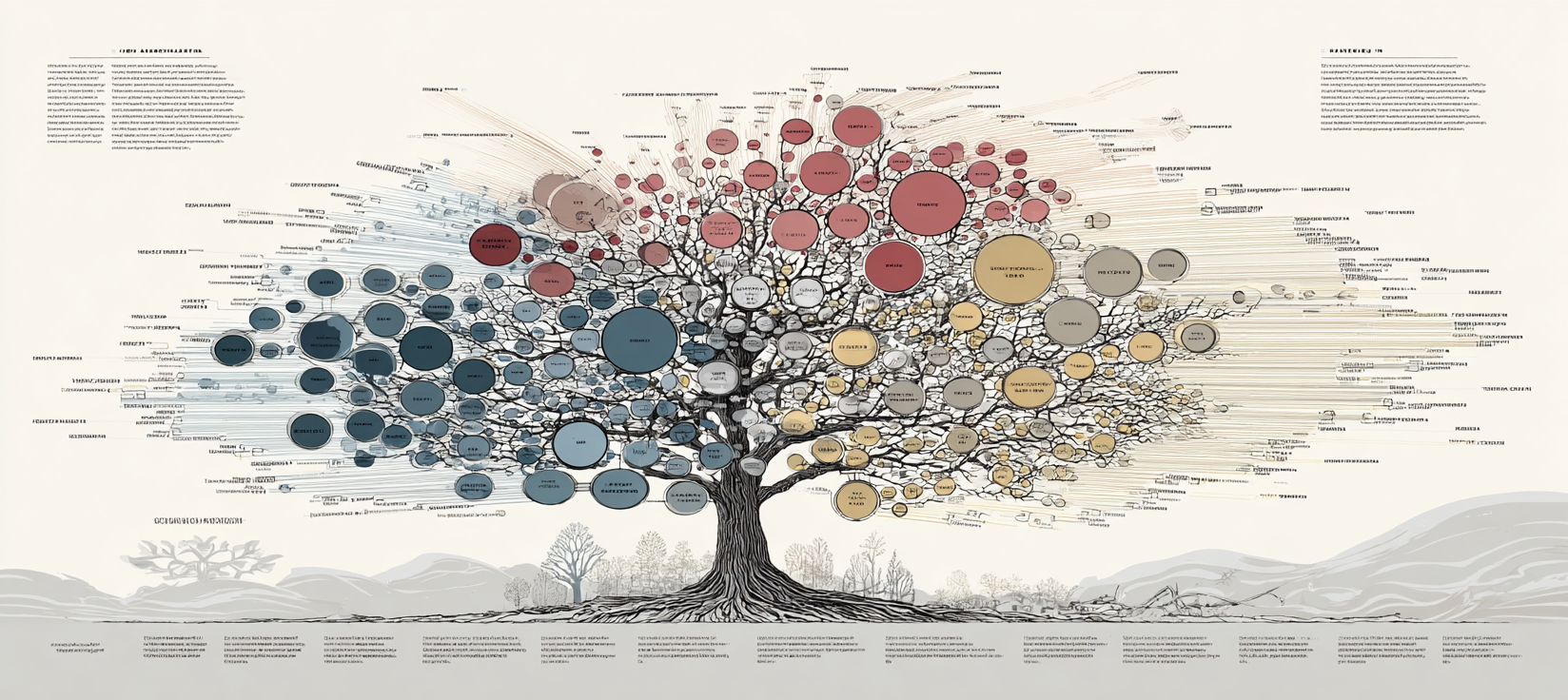

Topical List

To aid with introducing new terminology, this version of the list is presented in a topical order that avoids forward references as far as possible (definitions aim to use only words defined earlier in the list).

- Artificial Intelligence (AI): An umbrella term covering all technologies that attempt to have a computer think like a human

- Symbolic AI: Logic and rules about how to think about ideas, programmed by humans

- Statistical AI: Programs that identify patterns in data and use them to make predictions

- Neural AI: Complex structures made from simulated neurons that recognize patterns

- Machine Learning (ML): Systems that learn from data rather than being explicitly programmed

- Neural Network: A network made of layers of simulated neurons that mimic some function of the brain

- Activation Function: The heart of the artificial neuron; a simple equation that determines whether a neuron fires (passes an input signal on through the connected synapses)

- Parameters: The collection of numbers that make a given model unique (the arrangement of information)

- Weights: The equivalent of synapses in the brain; what connects to what and how strongly

- Biases: How likely a given artificial neuron is to fire

- Architecture: The types of layers, how they connect and what activation function(s) to use (the arrangement of connectivity)

- Latent Space: The long-term memory of the model, which encodes its knowledge and skills; very efficient and usually incomplete

- Deep Learning: Machine learning that uses a neural network with many layers

- Model: A specific neural AI (ChatGPT, Claude and Llama are examples of different neural AIs)

- Training: The process of feeding a neural network training data so it can adjust its internal structure to perform better

- Supervised Learning: Uses structured data for which the correct answers are known; like a classroom textbook with all the answers in the back

- Reinforcement Learning: Asks the model questions or allows it to try by itself; like learning through trial and error with a teacher saying 'right' or 'wrong'

- Unsupervised Learning: Provides data without explicit context, allowing the model to figure out what is important on its own (like independent research for a school paper)

- Prompt: A question or instruction posed to a model, usually by a human

- Inference: The process by which a model responds to a prompt

- Generative AI: An AI that produces new content (text, audio, visual, multimedia)

- Large Language Model (LLM): A deep learning model trained on a large amount of text to predict and generate human-like language

- Context Window: The short-term, working memory of an LLM
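
To make a few of these terms concrete, here is a minimal sketch of a single artificial neuron in Python. It is an illustration only, not how any real model is built: the sigmoid activation, the hand-picked numbers, the learning rate and the function names are all assumptions chosen for readability. It shows how weights, a bias and an activation function combine to produce an output, and how a few rounds of supervised training nudge the parameters toward a known correct answer.

```python
import math


def sigmoid(x: float) -> float:
    """Activation function (one common choice): squashes any number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum of the inputs, plus the bias, then activation."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(total)


# Parameters = weights + bias. These values are made up for illustration.
weights = [0.8, -0.4]
bias = 0.1
inputs = [1.0, 0.5]

# Running the neuron on an input (a very small-scale version of inference).
output = neuron(inputs, weights, bias)
print("before training:", output)

# Supervised training: the correct answer (target) is known in advance,
# so each step adjusts the parameters to shrink the squared error.
target = 1.0
learning_rate = 0.5  # assumed step size
for _ in range(100):
    out = neuron(inputs, weights, bias)
    # Gradient of 0.5 * (out - target)**2 with respect to the weighted sum.
    grad = (out - target) * out * (1.0 - out)
    weights = [w - learning_rate * grad * x for w, x in zip(weights, inputs)]
    bias -= learning_rate * grad

trained_output = neuron(inputs, weights, bias)
print("after training:", trained_output)
```

After the loop, the output sits closer to the target than before. A real neural network does the same thing with many neurons arranged in layers and with far more parameters.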

Alphabetical List

Here is the same list again, in alphabetical order, for easy future reference:

- Activation Function: The heart of the artificial neuron; a simple equation that determines whether a neuron fires (passes an input signal on through the connected synapses)

- Architecture: The types of layers, how they connect and what activation function(s) to use (the arrangement of connectivity)

- Artificial Intelligence (AI): An umbrella term covering all technologies that attempt to have a computer think like a human

- Biases: How likely a given artificial neuron is to fire

- Context Window: The short-term, working memory of an LLM

- Deep Learning: Machine learning that uses a neural network with many layers

- Generative AI: An AI that produces new content (text, audio, visual, multimedia)

- Inference: The process by which a model responds to a prompt

- Large Language Model (LLM): A deep learning model trained on a large amount of text to predict and generate human-like language

- Latent Space: The long-term memory of the model, which encodes its knowledge and skills; very efficient and usually incomplete

- Machine Learning (ML): Systems that learn from data rather than being explicitly programmed

- Model: A specific neural AI (ChatGPT, Claude and Llama are examples of different neural AIs)

- Neural AI: Complex structures made from simulated neurons that recognize patterns

- Neural Network: A network made of layers of simulated neurons that mimic some function of the brain

- Parameters: The collection of numbers that make a given model unique (the arrangement of information)

- Prompt: A question or instruction posed to a model, usually by a human

- Reinforcement Learning: Asks the model questions or allows it to try by itself; like learning through trial and error with a teacher saying 'right' or 'wrong'

- Statistical AI: Programs that identify patterns in data and use them to make predictions

- Supervised Learning: Uses structured data for which the correct answers are known; like a classroom textbook with all the answers in the back

- Symbolic AI: Logic and rules about how to think about ideas, programmed by humans

- Training: The process of feeding a neural network training data so it can adjust its internal structure to perform better

- Unsupervised Learning: Provides data without explicit context, allowing the model to figure out what is important on its own (like independent research for a school paper)

- Weights: The equivalent of synapses in the brain; what connects to what and how strongly