AI Part 1: A Brief History of Artificial Intelligence

We are currently in the middle of the third AI boom; not the first, and likely not the last. It’s worth looking back at the road that brought us to this point because a) history has a well-known tendency to repeat itself and b) it will help place the current moment in perspective.

Early Days

The idea of the artificial neuron was first proposed in 1943 by Walter Pitts and Warren McCulloch, partially based on Alan Turing's groundbreaking work in the 1930s.

The first machine to make use of this novel idea was built in 1951. Marvin Minsky's SNARC was built using 40 vacuum tube-based neurons and was capable of solving simple mazes. In that same year computer programs were also learning to play checkers and chess.

The momentum accelerated in 1956 with the Dartmouth workshop, a meeting of like-minded academics interested in "thinking machines" hosted by John McCarthy (who coined the term "Artificial Intelligence"), which established AI as a formal discipline.

1956 also saw the development of the first AI program, “Logic Theorist”. It independently proved 38 theorems from Principia Mathematica, an important math reference of the day, in one case doing so even more elegantly than the book’s authors. One of those authors, Bertrand Russell, was reportedly delighted to hear he’d been upstaged by the first AI.

Amusingly, The Journal of Symbolic Logic rejected the creators' paper as lacking notability, somehow missing the fact that the proof's author was not human.

Later that year, at another early AI event, George Miller said "I left the symposium with a conviction, more intuitive than rational, that experimental psychology, theoretical linguistics, and the computer simulation of cognitive processes were all pieces from a larger whole."

Those instincts were well placed. The events of 1956 also laid the groundwork for the emergence of cognitive science in the 1970s: the intersection of Anthropology, Linguistics, Neuroscience, Psychology, Philosophy and Artificial Intelligence.

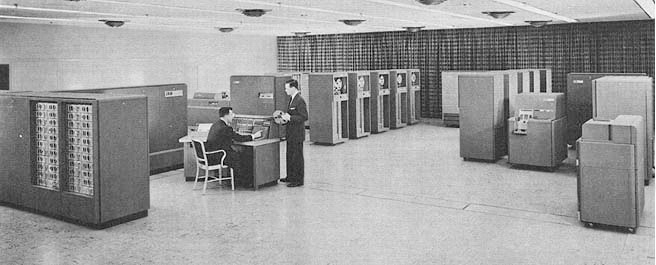

First AI Boom

The amazing developments in the academic sphere soon drew interest from the Department of Defense, which in turn fueled the first AI boom.

Take Joseph Weizenbaum's ELIZA for instance, the first ChatGPT-style chatbot, written in 1966 to simulate a Rogerian psychotherapist. If ChatGPT sent shockwaves through the world upon its release in 2022, you can imagine the reaction of users 60 years ago. Consider this conversation:

Young woman: Men are all alike.

ELIZA: In what way?

Young woman: They're always bugging us about something or other.

ELIZA: Can you think of a specific example?

Young woman: Well, my boyfriend made me come here.

ELIZA: Your boyfriend made you come here?

Young woman: He says I'm depressed much of the time.

ELIZA: I am sorry to hear you are depressed.

Young woman: It's true. I'm unhappy.

ELIZA: Do you think coming here will help you not to be unhappy?

Young woman: I need some help, that much seems certain.

ELIZA: What would it mean to you if you got some help?

Young woman: Perhaps I could learn to get along with my mother.

ELIZA: Tell me more about your family.

Young woman: My mother takes care of me.

ELIZA: Who else in your family takes care of you?

Young woman: My father.

ELIZA: Your father.

Young woman: You are like my father in some ways.

ELIZA: What resemblance do you see?

Young woman: You are not very aggressive but I think you don't want me to notice that.

ELIZA: What makes you think I am not very aggressive?

Young woman: You don't argue with me.

ELIZA: Why do you think I don't argue with you?

Young woman: You are afraid of me.

ELIZA: Does it please you to believe I am afraid of you?

Young woman: My father is afraid of everybody.

ELIZA: What else comes to mind when you think of your father?

Young woman: Bullies.

ELIZA: Does that have anything to do with the fact that your boyfriend made you come here?

Not coincidentally, ELIZA also led to the discovery of the ELIZA effect where, in the program’s author’s own words, “extremely short exposures to a relatively simple computer program could induce powerful delusional thinking in quite normal people”, an effect we continue to see today with ChatGPT and other LLMs.

You can try ELIZA here if you like. Compared to its modern equivalents, you'll find it to be more terse, more inquisitive and much easier to trip up into being nonsensical.

Another example was SHRDLU: an AI that could carry on a conversation with a human in English, solve problems and manipulate objects in a simulated world. Here’s an excerpt from a real exchange with SHRDLU:

Person: Put the blue pyramid on the block in the box.

Computer: OK.

Person: Is there anything which is bigger than every pyramid but is not as wide as the thing that supports it?

Computer: YES, THE BLUE BLOCK.

Person: Does a steeple

Computer (interrupting): SORRY, I DON'T KNOW THE WORD "STEEPLE".

Person: A "steeple" is a stack which contains two green cubes and a pyramid.

Computer: I UNDERSTAND.

Person: Are there any steeples now?

Computer: NO.

Person: Build one.

Computer: OK.

Still impressive by today’s standards, and certainly breathtaking for a computer program developed between 1968 and 1970.

As human nature has not changed in the intervening period, the reactions of lay-people (shock) and practitioners (wild predictions and internal turf wars), along with the ELIZA effect, very much mirror what we see today. The hype also sounds eerily familiar:

- "Machines will be capable, within twenty years, of doing any work a man can do." - H.A. Simon, 1960

- "In from three to eight years we will have a machine with the general intelligence of an average human being." - Marvin Minsky, 1970

- "[The Mark I Perceptron is] the embryo of an electronic computer that [the Navy] expects will be able to walk, talk, see, write, reproduce itself and be conscious of its existence." - Frank Rosenblatt, 1958 (In fairness to him, he was partially misquoted, but it captures the narrative of the day)

However, progress ultimately stalled. While the scientists of the day were adept at creating a software implementation of reason, they rapidly discovered that pattern-matching tasks like recognizing a face were surprisingly difficult, far more so than proving formal theorems. Indeed, the problems were intractable with the tools of the day.

These early practitioners also discovered just how much data would be required to make intelligent systems work. They started from the idea that an AI would only need to know as much as a child to be useful.

They immediately found, however, that a child actually knows an incredible amount: birds are small animals that fly, have beaks and eat worms, except that is not true of all birds, and so on. It is a large amount of messy information, with exceptions to exceptions to exceptions.

Formalizing commonsense knowledge and reasoning into a system, especially given the tools of that era, was effectively impossible.

First AI Winter

Ultimately, when performance did not match expectation, at all, government funding dried up and the first AI boom came to a close in 1974.

During the following period, known as the first AI Winter, a number of important ideas were quietly developed, most of which now act as the basis of current systems.

Arguably chief among these developments were advances in simulating intelligence through the use of artificial neurons, building on Pitts and McCulloch's pioneering work, a school of thought that became known as connectionism.

At the time, connectionism was something of the discipline's black sheep. Symbolic AI (logic and rules about how to think, programmed by humans) was ascendant and was the basis of almost all the achievements of the day.

This led to the development of a kind of tribalism within the discipline. Scientists are still human, after all. The symbolic AI proponents dumped all over their connectionist colleagues at every turn, engendering resentment and grudges that still shape the conversation about AI today. Consider Geoffrey Hinton's strident anti-symbolic attacks.

That era's connectionist work, however, is the foundation of almost everything happening in the industry today, especially a technique known as “backpropagation”, a method for efficiently training neural networks by automatically adjusting their internal settings until they produce the right answers.
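To make "automatically adjusting internal settings" a little more concrete, here is a minimal Python sketch of backpropagation on the smallest network that still needs it: one hidden neuron feeding one output neuron. Everything in it (the function names, the sigmoid activation, the squared-error loss, the learning rate) is an assumption chosen for illustration, not a reconstruction of any historical system.

```python
import math


def sigmoid(z):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def forward(w1, w2, x):
    """Run the input through a hidden neuron, then an output neuron."""
    h = sigmoid(w1 * x)   # hidden neuron's activation
    y = sigmoid(w2 * h)   # network's answer
    return h, y


def loss(w1, w2, x, target):
    """Squared error between the network's answer and the right answer."""
    _, y = forward(w1, w2, x)
    return 0.5 * (y - target) ** 2


def backprop(w1, w2, x, target):
    """Return how much each weight contributed to the error (the gradients)."""
    h, y = forward(w1, w2, x)
    # Error signal at the output neuron (chain rule through the sigmoid).
    delta_out = (y - target) * y * (1.0 - y)
    grad_w2 = delta_out * h
    # Pass the error signal backwards through w2 to the hidden neuron.
    delta_hidden = delta_out * w2 * h * (1.0 - h)
    grad_w1 = delta_hidden * x
    return grad_w1, grad_w2


def train(w1, w2, x, target, lr=0.5, steps=200):
    """Repeatedly nudge both weights in the direction that reduces the error."""
    for _ in range(steps):
        g1, g2 = backprop(w1, w2, x, target)
        w1 -= lr * g1
        w2 -= lr * g2
    return w1, w2


if __name__ == "__main__":
    start = (0.5, -0.3)                      # arbitrary starting weights
    trained = train(*start, x=1.0, target=0.9)
    print("loss before:", loss(*start, 1.0, 0.9))
    print("loss after: ", loss(*trained, 1.0, 0.9))
```

Real networks do the same thing with millions or billions of weights at once, which is why the efficiency of the method matters so much.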

Symbolic AI research continued as well, and by the late ’70s some successes were made in the form of “expert systems”, which concentrated on narrow fields of specialist knowledge and effectively digitized human experience.

This neatly avoided the sheer scale of the common sense problem and allowed researchers to create useful systems in various fields such as medical diagnosis and mass customization.

Second AI Boom

These developments, along with the massive Japanese “Fifth-Generation Computer” initiative, brought not only government interest back to AI but also drew significant attention from the corporate world. The second boom had begun, less than a decade after the first fizzled out.

Numerous promising systems were created in the second boom, most notably XCON, which saved its maker tens of millions of dollars a year configuring computer systems, and CADUCEUS, a system designed to assist doctors that was capable of diagnosing over 1,000 diseases even in very complex circumstances (overlapping symptoms and incomplete data). They were the Siri of their day, but limited to very narrow disciplines.

However, as the ’90s began, the promise of the technology once again failed to meet expectations and the second AI boom came to an end.

Second AI Winter

In the second AI Winter that followed, there was a shift in focus from formal reasoning to the application of knowledge as the informal definition of intelligence. The period also saw advances in robotics and the idea of embodied reason (“intelligence can’t really blossom until the AI has a body”), fuzzy logic (dealing with problems that aren’t neat and tidy 0s and 1s) and reinforcement learning (trial and error exercises). As neural AI was still a marginal discipline, the underlying technology used was still symbolic AI, later dubbed “GOFAI” (Good Old-Fashioned AI) to distinguish it from neural and statistical methods.

For 12 years or so, various AI splinter technologies continued to be used and to make small advancements behind the scenes, often deliberately hidden or given less toxic names such as “informatics” and “knowledge-based systems”. One form, known as “statistical AI”, became prevalent during this period, making useful contributions to problems such as spam filtering and data mining (“Bayesian” was a common buzzword of the day).

The Long Thaw

Starting around 2005, the rise of the Graphics Processing Unit, or GPU, along with advances in storage technology, started to allow useful experimentation with the connectionist ideas of the ’80s. GPUs are specialized chips that perform huge numbers of linear algebra operations, such as matrix multiplications, in parallel. Such operations are not only the foundation of the graphics found in games and movies, but also of neural AI.

Early in their training, AI models rely on a technique known as “supervised learning” which uses a carefully prepared dataset for which the correct answers are already known (like a classroom textbook). Thus, quality datasets from which to train are extremely important, but also very costly to produce. This period saw the development of ImageNet, a database of 14 million images annotated by hand across 20,000 categories by a global team of 50,000 workers, with each annotation checked three times.

The availability of ImageNet led to the 2012 development of AlexNet, which was far and away the most accurate visual model of its day. This accomplishment led to widespread interest in academia and corporate research groups, particularly for the long-neglected field of neural AI. Shortly afterward, facial recognition features began appearing in the now ubiquitous smartphone.

Google was a natural fit to help move AI forward. The principal problem of common knowledge is that it not only requires a colossal amount of data, but it must also be possible to very rapidly and correctly *search* that data, otherwise it is useless. Indeed, Google’s technology has, since day one, been based on the work of earlier AI scientists trying to solve this very problem.

That’s why, in 2017, a group of eight scientists at Google published a paper called “Attention Is All You Need”. While it did not make waves outside of a portion of the AI community, it was arguably the most significant advance in neural AI architecture since the ’80s. They called the new architecture the “transformer”.

It solved two major problems. The first was that large networks were very hard to train because the signals used to train a model get weaker and weaker the farther they travel through the simulated neurons. The architecture solved this problem, allowing for much larger and much more capable models to be developed.
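The weakening training signal can be illustrated with simple arithmetic. The Python sketch below is a rough upper bound under stated assumptions: every layer uses the sigmoid function (whose slope never exceeds 0.25) and every connection weight has size 1. The function name and the default values are hypothetical, and the sketch shows only the problem, not the transformer's remedy.

```python
def surviving_signal(layers, slope=0.25, weight=1.0):
    """Upper bound on the share of the training signal left after `layers` layers.

    On its way backwards through the network, the signal is multiplied at
    each layer by (weight * slope). 0.25 is the steepest slope the sigmoid
    function can have, so this is the best case for a sigmoid network whose
    weights all have size `weight`.
    """
    return (abs(weight) * slope) ** layers


if __name__ == "__main__":
    for depth in (1, 5, 10, 20):
        print(f"{depth:>2} layers: at most {surviving_signal(depth):.2e} of the signal remains")
```

Under these assumptions, each extra layer cuts the signal to a quarter of what it was, so the earliest layers of a deep network receive almost nothing to learn from.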

The second problem was sorting out the ordering of words and which words were important in combination with other words, even if they are not close to each other in the sentence. To a model that ignores word order, “Man bites dog” and “Dog bites man” have the same meaning. This is a problem that is easy to see, but very hard to teach a collection of artificial neurons to handle properly.
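To make the word-order problem concrete, here is a minimal Python sketch. The first function shows an order-blind "bag of words" view, in which the two example sentences are indistinguishable. The second computes scaled dot-product attention weights, the scoring step at the heart of the transformer, for one word against the others. The two-number word vectors are made up for illustration; real models learn vectors with hundreds or thousands of dimensions and also add position information to each word, which this sketch leaves out.

```python
import math
from collections import Counter


def bag_of_words(sentence):
    """Order-blind view of a sentence: which words occur, and how often."""
    return Counter(sentence.lower().split())


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    biggest = max(scores)
    exps = [math.exp(s - biggest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query, keys):
    """Scaled dot-product attention: how strongly one word attends to each word."""
    d = len(query)
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
        for key in keys
    ]
    return softmax(scores)


if __name__ == "__main__":
    # Without order information the two sentences look identical.
    print(bag_of_words("Man bites dog") == bag_of_words("Dog bites man"))

    # Hypothetical 2-dimensional vectors for "man", "bites" and "dog".
    keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    query = [1.0, 1.0]  # hypothetical query vector for the word "bites"
    print(attention_weights(query, keys))
```

Because every word is scored against every other word directly, distance within the sentence does not matter: a word at the start can attend to a word at the end just as easily as to its neighbor.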

Needless to say, this new architecture dramatically improved the accuracy and understanding of the AI models.

The Third AI Boom

Much of what we see happening in the world today is the direct result of Google's attention paper, including the memorable introduction of ChatGPT to the world, which sparked the boom that we are now experiencing.

Much as in the '60s, there is a lot of hype and misinformation about an opaque but impressive technology. As this series continues, we will demystify AI technology, look at how it can be used, and map out some likely future directions for this iteration of thinking machines.