AI Part 3: Human intelligence, the model for AI

Cognitive psychologists recognize different modes of processing in human thinking: automatic, intuitive/heuristic and deliberate/analytical.

The automatic represents the functions that happen below the awareness of the person in question, such as most of the vision pipeline (we experience the end of that process, but not everything that goes into it), reflexes, pupil dilation, etc.

Note that heartbeat and many body-related functions are handled locally; your heart has its own simple neural network that causes it to beat; otherwise the hearts of quadriplegics would stop.

The automatic mode is sometimes called the "hind-brain" in colloquial usage, which maps roughly, although imperfectly, onto the anatomical "hind-brain".

Intuitive/heuristic, our instinctive mode, is where most humans spend the overwhelming bulk of their lives, and represents the limit of brain function for the vast majority of other creatures.

It is a fast, reactive system that quickly searches our personally accumulated knowledge to match inputs, balancing the various results to arrive at the next action. It is extremely efficient, aggressively filtering out unimportant stimuli and responding only to changes in the remainder. It represents the part of our brain that governs instinct and intuition.

A good example of this behavior is walking through a busy pedestrian intersection while speaking with a friend. You instinctively navigate a complex environment with numerous obstacles (people) moving in different directions and at different speeds, each with a different level of awareness that you judge from their individual properties (kids, the elderly, drunk people, inattentive people, etc.), all while formulating your opinion about something your friend is telling you and updating that opinion with every word they speak.

This feels trivial only because our brains are so well adapted to it. It’s much harder to teach that to a computer.

Intuitive/heuristic is sometimes referred to as "mid-brain" thinking, but this kind of processing is spread somewhat evenly throughout the brain as a whole, rather than being confined to the relatively small area that officially constitutes the "mid-brain" anatomically.

Deliberate/analytical, our reasoning mode, is much slower and much less energy-efficient than intuitive/heuristic. This area of the brain provides abstract reasoning and, through introspection, the crucial ability to re-program the instinctive brain (adaptability). This is what separates us and a select number of other species from ordinary animals.

Primates, cetaceans (dolphins, whales), octopuses, elephants, pigs, and corvids (crows, ravens, etc.) also possess this reasoning capability, although in a less sophisticated form.

This modality is often expressed as "fore-brain" thinking in common conversation, a description that overlaps reasonably well with where such activity actually occurs anatomically.

Speaking with a very broad brush, this part of the brain tends to be less frequently engaged by those who work outside abstract professions (e.g. law, engineering, medicine, science), which aligns with the survival mechanic that minimizes energy use.

This is not to say that the "mid-brain" by itself is not capable of sophisticated behavior: a leopard can know that it needs food, decide to go hunting, evaluate a herd, formulate intent and a plan of action to carry it out. It’s the difference between “cunning” (intuition) and “book smarts” (reasoning).

It also explains why teenagers often slowly think things through when presented with new ideas, while adults, when presented with the same information, will make a snap judgement. The adults can do this because they have already either a) been sufficiently exposed to the pattern to formulate an intuitive reaction, or b) learned an existing reactive pattern from others.

In the case of the latter, such adults may become frustrated because the answer should be instant and “obvious” to the teenager, even if the adults themselves can’t express why.

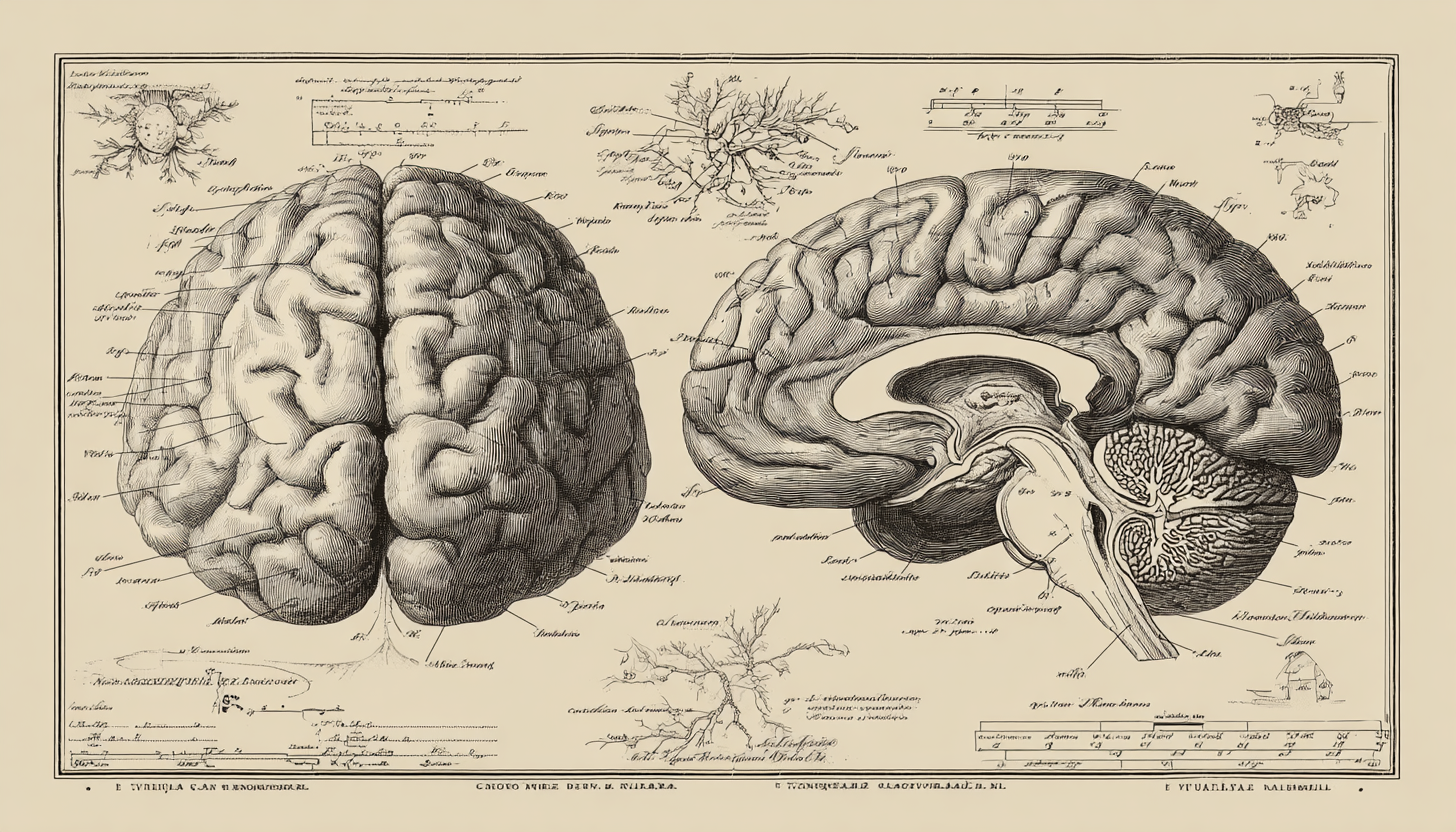

At the base of all of this is the humble neuron. It is the basic building block from which we can construct any number of useful structures: a simple wire to transmit signals, the timer that makes our hearts beat, a visual cortex to find edges, shapes and objects in the field of view, facial recognition, memory storage, reasoning, etc. It is to the brain what the transistor is to the computer.

Human and artificial intelligence building blocks

The transistor is the fundamental building block of every single thing that happens in a computer; it is the “primitive” to use the jargon. We combine transistors into standard assemblies called logic gates, and then we combine logic gates into standard assemblies which represent a single operation, like addition or storing a bit of memory.

We continue combining these lower-level assemblies, layer upon layer, into increasingly abstract higher-level components, such as sending bytes across the network or a widget that plays videos.
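The layering described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `nand` function stands in for a small arrangement of transistors, and every name here (`nand`, `full_adder`, `add_bytes`) is hypothetical rather than a description of any real chip.

```python
def nand(a: int, b: int) -> int:
    """Assumed primitive: outputs 0 only when both inputs are 1."""
    return 0 if (a and b) else 1

# Layer 1: standard logic gates, each built only from the primitive.
def not_(a: int) -> int:
    return nand(a, a)

def and_(a: int, b: int) -> int:
    return not_(nand(a, b))

def or_(a: int, b: int) -> int:
    return nand(not_(a), not_(b))

def xor(a: int, b: int) -> int:
    n = nand(a, b)
    return nand(nand(a, n), nand(b, n))

# Layer 2: a single operation (adding three bits), built from gates.
def full_adder(a: int, b: int, carry_in: int) -> tuple[int, int]:
    partial = xor(a, b)
    total = xor(partial, carry_in)
    carry_out = or_(and_(a, b), and_(partial, carry_in))
    return total, carry_out

# Layer 3: a more abstract component (8-bit addition), built from adders.
def add_bytes(x: int, y: int) -> int:
    carry = 0
    result = 0
    for i in range(8):  # least significant bit first
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit << i
    return result  # the final carry is dropped, so the sum wraps at 256

print(add_bytes(5, 7))
```

Each layer only calls the layer directly beneath it, which is the same discipline that lets hardware designers reason about a video widget without thinking about individual transistors.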

This is also true of the structure of our brains. Many simple patterns are repeated again and again as they are the most efficient arrangement of neurons to carry out a specific operation. These structures are combined into more complex and abstracted layers until we arrive at functional units like the cerebellum or parietal lobe.

This pattern also appears in how information gets processed by both our brains and neural networks. The best example is human/computer vision.

Human vision starts with nerve connections to each of the cones in our eyes, which are then assembled into the colored picture elements. This includes purple which, as an aside, is not a real color in the physical sense but is something our brains make up to reconcile the presence of red and blue wavelengths at the same point.

The brain then finds edges in the resulting picture, combines those edges into shapes and the shapes into objects, identifies those objects generally (a man and a cat), and finally identifies them specifically as “Bob and Tickles”.

Computer vision models and multi-modal models (those that are capable of working in more than one medium, such as text and pictures) work very similarly.

First, colored dots are captured with a camera (picture elements or pixels), whose color values are modeled after the red, green and blue sensing cones in our eyes. Then these color values are processed through the AI model, layer-by-layer, in increasing levels of abstraction (pixels -> edges -> shapes -> objects) finally arriving at “a man and a cat” if not “Bob and Tickles”.
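To make the first step of that progression (pixels -> edges) concrete, here is a minimal sketch. It assumes a hand-written brightness-difference filter; real vision models learn their filters from data rather than having them written by hand, and the function names and tiny test image are invented for illustration.

```python
def to_gray(image):
    """Average each pixel's (red, green, blue) values into one brightness value."""
    return [[sum(pixel) / 3 for pixel in row] for row in image]

def find_vertical_edges(gray):
    """Compare each pixel's left and right neighbours.

    The result is large wherever brightness changes sharply from left to
    right, which is where a vertical edge sits. Border columns are left at 0.
    """
    height, width = len(gray), len(gray[0])
    edges = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(1, width - 1):
            edges[y][x] = abs(gray[y][x + 1] - gray[y][x - 1])
    return edges

# A 4x6 test image: black on the left half, white on the right half.
BLACK, WHITE = (0, 0, 0), (255, 255, 255)
image = [[BLACK] * 3 + [WHITE] * 3 for _ in range(4)]

edges = find_vertical_edges(to_gray(image))
edge_columns = [x for x, value in enumerate(edges[0]) if value > 0]
print(edge_columns)  # the columns on either side of the black/white boundary
```

Later layers would take this edge map as their input and look for combinations of edges (corners, curves, outlines), in the same way this layer took raw pixels as its input.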

One is implemented in biological material and the other in a rough software simulation of the same, underwritten by hardware and traditional programming. In both cases, the progression from simple to complex gives rise to both form and function.

Relative Complexity

It should be stressed, however, that artificial intelligence as it exists today is extremely simplistic compared to its biological counterpart.

The human brain contains hundreds of different kinds of neurons, hundreds of different kinds of synapses, tens of different kinds of dendrites (trees of connections from the synapses to the neuron's core: the soma) and a number of different neural architectures (the patterns of how these components are wired together).

Our brains possess hundreds of trillions of components, each differing in its physical composition (thickness, length, material, neurotransmitter concentrations, etc.) and its inter-connections, all of which contribute to our efficient computation.

This is to say nothing of the glial cells, which were long thought to be merely scaffolding or support structures for neurons, but are increasingly regarded as playing a role in cognition. There are about as many glia in the brain as neurons.

By comparison, a top-flight AI contains far fewer and much simpler components: approximately one trillion of essentially one kind of neuron and one kind of synapse in a limited number of configurations. Compared to many technologies, AI models look very complex, but compared to the brain, they look simple.
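To give a sense of how simple that one kind of artificial neuron is, here is a minimal sketch. It assumes the common textbook form: each "synapse" is a single number (a weight), and the neuron sums its weighted inputs and squashes the result. The sigmoid squashing function and the names used here are illustrative choices, not a description of any particular model.

```python
import math

def neuron(inputs, weights, bias):
    """One artificial neuron: a weighted sum followed by a squashing function."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 / (1 + math.exp(-weighted_sum))  # sigmoid: maps any number into (0, 1)

def layer(inputs, weight_rows, biases):
    """A layer is the same neuron repeated, each copy with its own weights."""
    return [neuron(inputs, weights, bias) for weights, bias in zip(weight_rows, biases)]

# Two inputs feeding a layer of two neurons.
outputs = layer([1.0, 0.0], [[2.0, -1.0], [0.0, 0.0]], [-2.0, 0.0])
print(outputs)
```

The entire behaviour of the component fits in a handful of lines, whereas a biological neuron varies in type, shape, chemistry and wiring.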

Since an AI uses principles derived from biological brains, it shouldn't come as a surprise that the artificial version exhibits behaviours similar to those of its inspiration. Indeed, that is the point of all simulations.

But it should also not come as any surprise that a highly simplified model that expresses only a fraction of the original's complexity will have non-trivial gaps in capability and efficiency. It takes megawatts of power to train and run an AI, but the brains of its creators cruise along at 20 W, about as much as an LED light bulb.